SubT Virtual Urban Circuit

Robotika Team

The Virtual Track of the Urban Circuit starts in parallel with the System Track. This time we will keep two separate threads/blogs, although they are connected: one team, Robotika. While the System Track is still a "big unknown", the qualification world for the Virtual Track is already prepared, so let's have a look … Update: 5/3/2020, Virtual Urban Worlds

Information from the community forum (registration required):

Qualification for the Virtual Urban Circuit will require submitting a docker solution against the Urban Circuit Qualification scenario through the Tech Repo (https://subtchallenge.world/compete). In order to qualify, a team will need to submit a docker solution that is able to successfully report at least five of the artifacts within one hour of simulation time. Teams may submit against the Urban Qualification scenario as many times as they choose until the deadline for the Virtual Urban Circuit qualification, January 3, 2020 AoE.

Tunnel Circuit Virtual Teams,

We hope your fall is going well. This is a notice that your team has received a Team Qualification waiver for the Urban Circuit of the Virtual Competition based on your successful participation at the Tunnel Circuit event.

However, please stay tuned for the upcoming release of an updated Qualification Guide. We do plan to release additional guidance related to team registration and additional requirements.

We look forward to your continued participation in the SubT Challenge!

Content

- Virtual Urban Circuit Environment Preview

- Ver31 - AWS logging

- Ver33 - First point on AWS, Urban Practice 1

- CloudSim final release, new robot configurations and Ver36 with depth data

- Urban qualification is closed now

- Virtual Urban Circuit Qualification Results

- Vent, Ver48, RTAB-Map and Urban submission window

- The Day Before Last Day

- Robotika final submission

- Virtual SubT Urban final results

- Virtual Urban Worlds

10th October 2019 — Virtual Urban Circuit Environment Preview

Here is our first test in the Simple Urban 01 world with ver25 from the Tunnel Circuit, with exploration time set to 120s: https://youtu.be/FJ95uL_KYq4. PavelJ is already working on a new configuration for robot X2 with lidar and an RGBD camera, which looks promising: https://youtu.be/e-0GVTE35BA. We are currently waiting for official documentation on how to add a new configuration.

2nd December 2019 — Ver31 - AWS logging

OK, it is time for some updates …

- Robotika sent a Letter of Intent to Participate in both the System and the Virtual Track of the Urban Circuit (confirmed on Nov 22nd).

- There is Ver31, which now successfully stores the OSGAR logfile via the /robot_data topic (200670743174.dkr.ecr.us-east-1.amazonaws.com/subt/robotika:ver31).

- The code is merged into master.

Any details? Well, there are some … for example we are not sure when it is

guaranteed that the ROS bag recorder is ready

(#276)?

Also the version on AWS is more than 2 weeks old

(#271).

No, the version numbering is not available (yet?)

(#256),

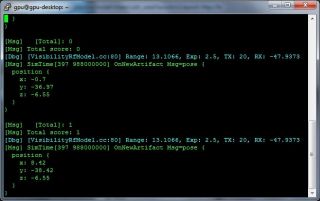

so the only hint is output from simulation:

How can we tell? Well, the entrance should be to the right, so the robot X coordinates in the staging area should be negative. This was fixed and the Docker images are available, but not on AWS.

As a side effect we found a bug: the local planner selected as its next goal a position behind the wall (the one nearest to the goal) and ended up in a local minimum. Forever.
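The failure mode can be illustrated with a toy version of greedy waypoint selection. This is a minimal sketch, not the OSGAR local planner: `select_next_goal`, the 1 m step and the clearance test are hypothetical. Candidate directions come from a planar scan given as (angle, free distance) pairs; without the clearance check, the candidate nearest to the goal can lie behind a wall, which is the kind of trap described above.

```python
import math


def select_next_goal(pose, goal, scan, step=1.0, check_clearance=True):
    """Greedy waypoint choice: the candidate point nearest to the goal.

    pose, goal: (x, y) in metres.
    scan: list of (angle_rad, free_distance_m) pairs from a planar lidar.
    step: how far ahead each candidate waypoint is placed.
    With check_clearance=False, directions blocked by a wall closer than
    `step` are still considered, so the chosen waypoint can lie behind it.
    """
    best, best_dist = None, float("inf")
    for angle, free in scan:
        if check_clearance and free < step:
            continue  # obstacle before the waypoint: not reachable directly
        cand = (pose[0] + step * math.cos(angle),
                pose[1] + step * math.sin(angle))
        d = math.dist(cand, goal)
        if d < best_dist:
            best, best_dist = cand, d
    return best


# Wall 0.5 m straight ahead, goal 5 m behind it, free space to the sides.
scan = [(0.0, 0.5), (math.pi / 4, 3.0), (math.pi / 2, 3.0), (-math.pi / 2, 3.0)]
print(select_next_goal((0.0, 0.0), (5.0, 0.0), scan, check_clearance=False))  # (1.0, 0.0)
print(select_next_goal((0.0, 0.0), (5.0, 0.0), scan))  # about (0.707, 0.707)
```

Even with the clearance check, purely greedy selection can still oscillate in a dead end, so it is only one ingredient of a planner.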

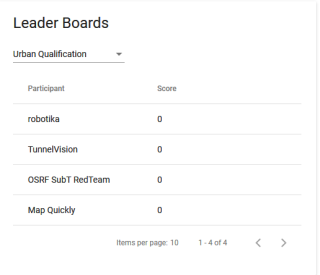

Just for reference, here is the leader board:

Simply put, the leader board for the Urban worlds is not working yet … maybe tomorrow? It is also the deadline for team registration for the System Track, and only one month is left until the Virtual Track qualification deadline (Jan 3rd 2020).

p.s. Note the status "Deleting Pods With no errors": I guess that this is the result of huge logfiles. As a test, I tried a simulation of 5 robots of different types.

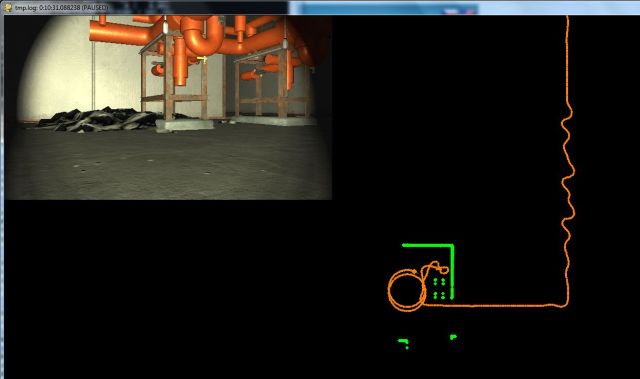

7th December 2019 — Ver33 - First point on AWS, Urban Practice 1

OK, we now have one point in the Urban Practice 1 simulated testing environment.

It is a backpack, and finding it required three changes:

- disable collision detection: it simply does not work, and crossing the entry gate causes readings of several g. Moreover, it looks like the organizers do not plan to fix this (see #243).

- slow down turning from 45deg/s to 20deg/s: basically the same topic as above, but here the problem is odometry on the X1 (large, Husky-like) robot

- classify all red objects as Backpack: in the Urban Circuit there is no Drill or Fire Extinguisher, and the new objects Vent and Gas are not red.
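The third change can be sketched as a simple color rule. The thresholds and the name `classify_red` are hypothetical, chosen only for illustration, since the real detector is not described here. Such a rule is only acceptable because, as noted above, the red artifacts Drill and Fire Extinguisher are absent and the new Vent and Gas are not red.

```python
def classify_red(pixels, min_red=150, dominance=1.5, min_count=100):
    """Report "Backpack" when enough pixels are strongly red, else None.

    pixels: iterable of (r, g, b) tuples with 0-255 channels.
    A pixel counts as red if r >= min_red and r is more than `dominance`
    times both g and b. All thresholds are illustrative assumptions.
    """
    red = sum(1 for r, g, b in pixels
              if r >= min_red and r > dominance * g and r > dominance * b)
    return "Backpack" if red >= min_count else None


# A small synthetic "image": a red blob on a grey background.
image = [(200, 30, 30)] * 120 + [(90, 90, 90)] * 500
print(classify_red(image))  # Backpack
```

A rule this blunt will also fire on any other red object in view, which is exactly the weakness that needs extra filtering later.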

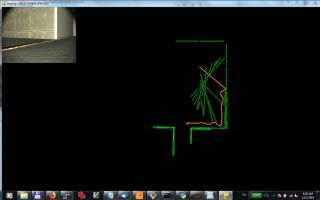

Here is the map from the local run:

Meanwhile, the AWS simulation with the HD camera (X2 config 4) was completed, so here is a nicer video of what the robot discovered.

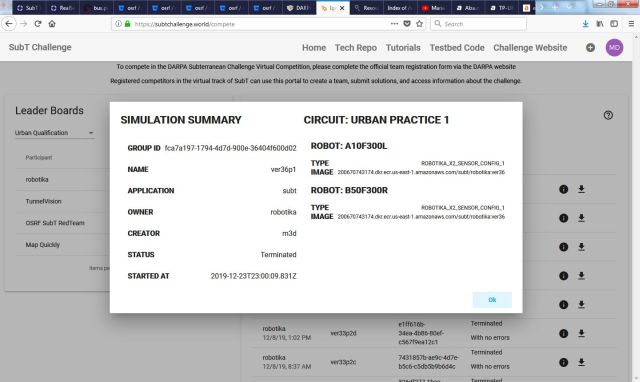

25th December 2019 — CloudSim final release, new robot configurations and Ver36 with depth data

There is some news since the last blog update; in particular, a new CloudSim version with new robot configurations is now available on AWS (see SubT Release Notes & Updates (2019-12-20)). Note that this should be the final release for the Urban Circuit, so no more modifications a day before the end of the competition. We will see!

There is one close deadline: Qualification ends on Jan 3, 2020, i.e. in 9

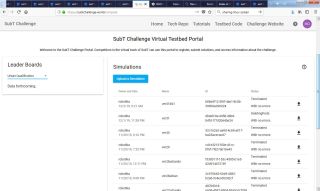

days. If you check the scoreboard

Urban Qualification then you can tell that new teams will have a hard time!

Robotika is "leading" with zero points at the moment, and you can see two new teams, TunnelVision and Map Quickly. The team OSRF SubT RedTeam is, I believe, just a testing team of the organizers and it will disappear later. If you want to qualify you have to score 5 points, and in particular you have to be able to navigate along the railway to the next stations where the artifacts are hidden. Definitely not an easy task. The old teams from the Virtual Tunnel Circuit have a qualification waiver, which is why they do not worry much … (yeah, including Robotika, but … if you are not able to qualify, how do you want to win?!).

The new logging system seems to be working fine, so I was able to download logs

from two robots A10F300L and B50F300R,

200670743174.dkr.ecr.us-east-1.amazonaws.com/subt/robotika:ver36. BTW I

like the new info feature

The results were not great. I am not able to extract log for robot A at the

moment, but that could be an issue on my side (work in progress). And what

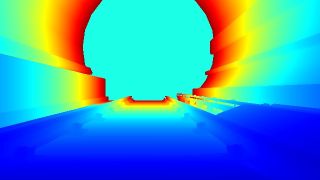

about B robot? See some pictures from RGBD camera with 640x360 resolution (BTW

that was another „lost case”, because this sensor should simulate

RealSense2, see

#295,

but Resistance is useless,

sigh).

The robot detected a problem on the stones:

0:09:06.926151 Pitch limit triggered termination: (pitch 26.5) 0:09:07.041879 maximum delay overshot: 0:00:00.115707 0:09:06.926151 Microstep HOME 0 248.373 pitch_limit 0:09:06.926151 go_straight -0.3 (speed: 1.0) (-67.239, -76.255, 0.105)

… backed up 30cm and tried the other side to exit this suspicious area. It worked, but … then it ended up in an endless loop:

… until it decided that it was time to go home.
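The reaction to the stones can be sketched as a pitch guard. The log shows the termination at a pitch of 26.5 (degrees, presumably) followed by `go_straight -0.3`. The 20 degree threshold, the function names and the `switch_side` step are hypothetical placeholders, not the actual OSGAR code.

```python
import math

PITCH_LIMIT_DEG = 20.0  # hypothetical threshold; the log only shows 26.5 tripping it
BACKUP_DIST_M = 0.3     # matches the go_straight -0.3 seen in the log


def pitch_exceeded(pitch_rad, limit_deg=PITCH_LIMIT_DEG):
    """True if the robot is tilted forward or backward beyond the limit."""
    return abs(math.degrees(pitch_rad)) > limit_deg


def recovery_steps(pitch_rad):
    """Return the (hypothetical) command list to run after a pitch trip:
    back up a little, then retry the obstacle from the other side."""
    if not pitch_exceeded(pitch_rad):
        return []
    return [("go_straight", -BACKUP_DIST_M), ("switch_side", None)]


print(recovery_steps(math.radians(26.5)))  # [('go_straight', -0.3), ('switch_side', None)]
```

Note that a guard like this only ends the current motion step; it does not remember the area, so the robot can keep returning to it, which is one way to end up in a loop.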

To be continued.

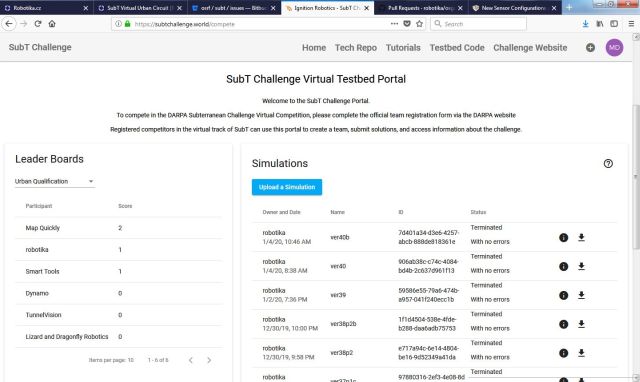

5th January 2020 — Urban qualification is closed now

Yesterday (Jan 3, 2020, AoE, i.e. 13:00 Central European Time on Jan 4) was the deadline for the Virtual Urban Circuit Qualification. And the result? See the Urban Qualification Leader Board:

In short, it is not good for new teams: no team reached the 5-point limit! There was a request on the community forum (restricted access) to extend the deadline or lower the threshold, and the DARPA answer was:

The Virtual Urban Circuit qualification deadline and threshold will remain as outlined in the SubT Qualification Guide. We understand the challenges of autonomous navigation and artifact detection in these environments are difficult and require significant development time. You are encouraged to continue developing and submitting your solution to the Urban Qualification world and note there are more opportunities to compete in the Cave Circuit and the Final Event.

I agree that it is very hard to get any points in the Urban Qualification world (see SubT Virtual Urban Qualification - 1st point/video). The point was collected by the big wheeled platform X1 with a QVGA camera (320x240) and a 5-meter-range lidar, the weakest sensor configuration. The motivation for X1 was its large wheels, so that it can overcome the bolts holding the rails. Note the bad orientation on the rail, probably caused by the robot tilt (I hope). Also note that the robot had to go all the way to the next station to be able to turn around; it is not possible to do it safely on the railway. And yes, at the final stage, when the robot is supposed to navigate back along its own path, it failed too: the robot did not climb back onto the platform. Nevertheless, the robot reported the artifact, and the wall between the robot and the base station was not too big, so it scored 1 point!

The next attempts were with the ROBOTIKA_X2_SENSOR_CONFIG_1 configuration (robot X2 with a 640x360 RGBD camera). Here the robot has to enter the rails very precisely to be able to explore them.

BTW, robot X2 sometimes has problems even turning on the platform: the wheels are narrow and can get stuck in small gaps.

There have been several versions since my last report and I did not document them properly :-(

- Ver37: experiment with depth2scan (conversion of the RGBD depth image into part of the lidar scan), optimization of the Local Planner (it was too slow for this combination)

- Ver38: navigation along a trace (polyline) to explore the station platform. Cell phone detection. Scored 1 point in Practice2/video. Collected some data on how to fall down from the platform/video.

- Ver39: navigation down to the rails, scored one point in the qualification world. Collected railway data.

- Ver40: simulated slope lidar from the RGBD depth image. Detection of discontinuities, and the lidar scan enhanced by the detected rails. Targeted at the small X2 robot.
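The depth2scan idea from Ver37 can be sketched as follows: for every column of the depth image, take the nearest valid depth and turn it into one ray of a planar scan using the pinhole camera model. This is a sketch under assumptions: `depth2scan` here is a hypothetical stand-alone function, the intrinsics `fx` and `cx` and the 10 m cap are example parameters, and the image is assumed to already exclude floor pixels (a real implementation would restrict the rows to a height band).

```python
import math


def depth2scan(depth, fx, cx, max_range=10.0):
    """Collapse a depth image into a planar scan, one ray per image column.

    depth: list of rows, each a list of depths in metres (0.0 = no reading).
    fx, cx: horizontal focal length and principal point in pixels.
    Returns (angle_rad, range_m) pairs; positive angles are left of the
    optical axis. Columns without a valid reading report max_range.
    """
    scan = []
    for u in range(len(depth[0])):
        column = [row[u] for row in depth if 0.0 < row[u] < max_range]
        angle = math.atan2(cx - u, fx)
        if column:
            # depth is measured along the optical axis; convert it to the
            # distance along the ray through this column
            rng = min(column) / math.cos(angle)
        else:
            rng = max_range
        scan.append((angle, rng))
    return scan


print(depth2scan([[2.0, 3.0, 0.0], [1.5, 4.0, 0.0]], fx=1.0, cx=1.0))
```

Taking the minimum per column is what lets obstacles below or above the lidar plane appear in the planar scan.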

The final run was with five X2 robots and there was no happy end:

Well, we (Robotika) are qualified thanks to the waiver from the Tunnel Circuit, but there is a lot of work needed to score successfully in the 25 days remaining until the final submission!

8th January 2020 — Virtual Urban Circuit Qualification Results

OK, now the qualification disaster is official (restricted access):

Congratulations to the 8 teams who qualified for Virtual Urban Circuit! These teams provided narratives and had received score waivers for successfully submitting qualifying solutions to Tunnel Circuit. The teams competing in Virtual Urban Circuit are:

- AAUNO

- BARCS

- COLLEMBOLA

- Coordinated Robotics

- CYNET.ai

- Flying Fitches

- Robotika

- SODIUM-24 Robotics

We appreciate the new members of the SubT Challenge community who made significant progress with their solutions to the Virtual Urban Qualification world. We hope you will continue development to qualify for Virtual Cave Circuit and the Final Event.

Also, the list of teams is now up to date (it seems to me that there are no new teams in either track).

25th January 2020 — Vent, Ver48, RTAB-Map and Urban submission window

OK, it is time for a short report: where are we now? I would start with a review of the new Robotika releases:

- Ver41: navigate the first part via Trace for more reliable gate entering

- Ver42: limit the size of the ROS bag to 2GB (the simulation limit for user logfiles) so that we do not lose the beginning of the recording; depth2scan (detect large changes as obstacles)

- Ver43: reduce false artifact reports as Backpack (stricter criteria)

- Ver44: integrate Gas detection and reporting as an artifact; use the global (unified) coordinate system for app.pose2d

- Ver45: integrate vertical_scan from the depth camera

- Ver46: parameter tuning for depth2danger, for example to avoid a wooden pallet

- Ver47: integrate depth2dist, much denser RGBD camera filtering

- Ver48: implement and integrate down-drop detection
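The Ver42 idea of capping the ROS bag so that the beginning of the recording survives can be sketched as a writer that simply stops accepting data once the cap is reached, instead of rotating files and keeping only the latest part. `CappedWriter` is a hypothetical helper, not the rosbag API, and whether the 2GB limit means 2,000,000,000 bytes or 2 GiB is an assumption (the sketch uses 2 GiB).

```python
class CappedWriter:
    """Forward records to `write` until `limit_bytes` would be exceeded.

    After the first record that does not fit, every later record is dropped
    too, so the log holds a contiguous beginning of the run (a rotating log
    would keep only the end).
    """

    def __init__(self, write, limit_bytes=2 * 1024**3):  # assumed: 2 GiB
        self._write = write
        self.limit = limit_bytes
        self.written = 0
        self.dropped = 0
        self.full = False

    def write(self, record: bytes) -> bool:
        """Return True if the record was written, False if it was dropped."""
        if self.full or self.written + len(record) > self.limit:
            self.full = True
            self.dropped += 1
            return False
        self._write(record)
        self.written += len(record)
        return True
```

In use it wraps any byte sink, e.g. `CappedWriter(f.write)` for a file opened with `open(path, "wb")`; counting `dropped` records makes it visible in the analysis that the log was truncated.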

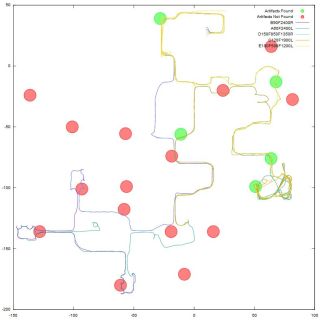

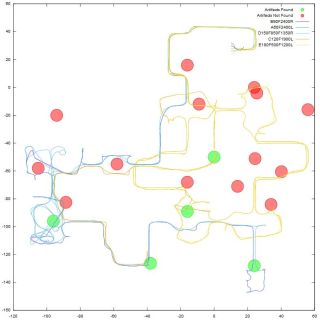

Here you can see the collected map of all five robots testing Ver47 in Urban Practice 3. You can notice several "details": some robots returned and navigated around the start area (robot A did this a couple of times), sometimes the position is shifted (i.e. wrong) due to lost odometry readings on a heap of stones or a wooden pallet, some robots ended in infinite (or almost infinite) cycles, and some lost their position (probably stuck on an obstacle, or they ended upside down).

Note that this overview picture is quite useful for analysis. Imagine 5 robots exploring the world for 1 hour: in total around 10GB of logfiles to be reviewed for changes and errors. Believe me, it is quite a time-consuming task! If you would like to try it, use osgar.tools.log2map, which now also accepts multiple logfiles (the motivation for the unified global coordinate system) and can also display the positions of detected artifacts (white dots).
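Merging several logfiles into one map only works if all poses live in one frame. The text says Ver44 switched app.pose2d to the global (unified) coordinate system; the sketch below shows the rigid 2D transform that maps a robot's own odometry frame into such a shared frame. It assumes each robot's start pose in the world frame is known, and `to_global` and `merge_tracks` are hypothetical helpers, not the osgar.tools.log2map code.

```python
import math


def to_global(local_pose, start_pose):
    """Map (x, y, heading) from a robot's odometry frame into the world frame.

    start_pose is where the robot's odometry origin sits in the world frame
    (x, y, heading), e.g. its known place in the staging area.
    """
    x, y, h = local_pose
    sx, sy, sh = start_pose
    c, s = math.cos(sh), math.sin(sh)
    return (sx + c * x - s * y,   # rotate by the start heading...
            sy + s * x + c * y,   # ...then translate by the start position
            (sh + h) % (2 * math.pi))


def merge_tracks(tracks, starts):
    """tracks: {robot: [local poses]}; starts: {robot: start pose}.
    Returns {robot: [(x, y) in the world frame]} ready to draw on one map."""
    return {name: [to_global(p, starts[name])[:2] for p in poses]
            for name, poses in tracks.items()}


print(merge_tracks({"A": [(1.0, 0.0, 0.0)], "B": [(1.0, 0.0, 0.0)]},
                   {"A": (0.0, 0.0, 0.0), "B": (0.0, 2.0, 0.0)}))
# {'A': [(1.0, 0.0)], 'B': [(1.0, 2.0)]}
```

Both robots drove one metre forward in their own frames; only the known start poses separate their tracks on the common map.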

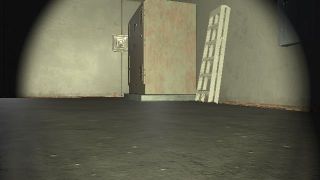

Below you can see some obstacles that are hard to handle with a planar lidar only, and the Vent, which we have seen for the first time:

So what is the plan with RTAP-Map

(Real-Time Appearance-Based Mapping)? At the moment we are developing two

solutions in parallel, where one is based on

OSGAR only while the other should be

hybrid combining also ROS packages. The plan for the last few remaining days (5

to the final submission) is to use RTAB-Map to independently check robot

location and use it for recovery in the case of failure … and if I did not

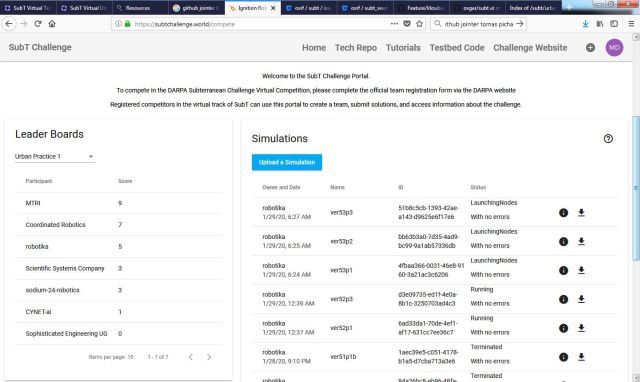

stress it enough, the submission windows is opened now! … see

https://subtchallenge.world/compete

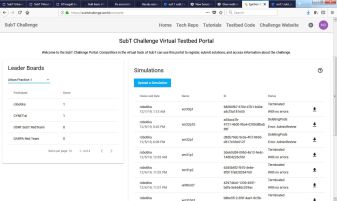

29th January 2020 — The Day Before Last Day

There is one day left until the end of the submission window and it is quite crazy again. Exhausted, sleepy, tired …

There are a few bright moments, like when ver51p1b scored 5 points, but there are many dark times when CloudSim does not work at all and the robot is not receiving data (the "b" is actually a repeated run, where the first one totally failed: the robot hit the wall (no lidar data), did not start (no data), or was not able to leave the start area (no IMU data)). Really crazy. See issue #261.
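One defensive measure against silent sensor topics is to check, before moving, that every required sensor has delivered a recent message. This is a hypothetical sketch: `stale_sensors`, `ready_to_start`, the sensor names and the 1 s limit are chosen for illustration. It cannot fix CloudSim; it only keeps a robot from driving blind into a wall.

```python
def stale_sensors(last_seen, now, max_age=1.0):
    """Names of sensors whose newest message is older than max_age seconds
    or that never delivered anything (timestamp None), sorted by name."""
    return sorted(name for name, t in last_seen.items()
                  if t is None or now - t > max_age)


def ready_to_start(last_seen, now, max_age=1.0):
    """Only allow motion when every required sensor is alive."""
    return not stale_sensors(last_seen, now, max_age)


# Simulated situation: lidar fresh, IMU never arrived, camera 3 s old.
last_seen = {"scan": 9.5, "imu": None, "rgbd": 7.0}
print(stale_sensors(last_seen, now=10.0))  # ['imu', 'rgbd']
```

The same check can run continuously during the mission, so that a topic going silent mid-run stops the robot instead of letting it continue on stale data.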

And this is how the score board looked this morning:

… but it is different now and all 8 competitors are uploading their latest

solutions for test.

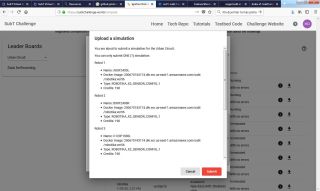

31st January 2020 — Robotika final submission

It is 5 hours to the AoE deadline and the Robotika final solution is submitted now. Thank God! It was as bad as last time, and much worse … but we are getting used to it, so DO NOT PANIC!

As a final report I would say that we did not learn. Since the Virtual Tunnel Circuit we know that the closer we are to the submission deadline, the harder it is to test anything on AWS. And the same happened again. On the other hand, the development peak was definitely this last week, so what is the solution to this riddle?! It started with "CloudSim stops sending some topics" (again): in short, some robots did not get any LIDAR scan data, for example, or IMU data. They did not start, or hit the wall shortly after the start because they had no idea there was a wall in front of them. At that point it no longer made sense to trust AWS CloudSim.

But that was not the end. As the machines became more and more overloaded, Sophisticated Engineering reported "Problems with Cloudsim". The simulations did not start, failed (Terminated with errors), or took hours (days?) and then failed.

Can this be worse? Yes, it can. An "Unknown Error" meant that competitors were no longer able to submit anything, not just test solutions but also finals. This was the state from midnight until at least 2am, when I went to bed to re-try now, in the morning. The deadline is AoE (Anywhere on Earth), i.e. until 13:00 Central European Time the following day. Nate wrote to me: "The server was down for a brief period of time. Sorry about that. Everything is back to normal. Please try again whenever you are ready."
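The AoE arithmetic is easy to get wrong at 2am. AoE (Anywhere on Earth) is UTC-12, so a day ends in AoE at 12:00 UTC on the next calendar day, which is 13:00 in Central European (winter) Time, UTC+1. A small sketch with Python's standard `datetime` module; `aoe_deadline_in` is a hypothetical helper name.

```python
from datetime import date, datetime, timedelta, timezone

AOE = timezone(timedelta(hours=-12), "AoE")  # Anywhere on Earth = UTC-12
CET = timezone(timedelta(hours=1), "CET")    # Central European (winter) Time


def aoe_deadline_in(day: date, tz: timezone) -> datetime:
    """Moment when `day` ends in AoE, expressed in the time zone `tz`."""
    end_of_day = datetime(day.year, day.month, day.day, tzinfo=AOE) + timedelta(days=1)
    return end_of_day.astimezone(tz)


print(aoe_deadline_in(date(2020, 1, 3), CET))   # 2020-01-04 13:00:00+01:00
print(aoe_deadline_in(date(2020, 1, 31), CET))  # 2020-02-01 13:00:00+01:00
```

The fixed UTC+1 offset is only valid in winter; for summer deadlines, CEST (UTC+2) would apply.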

And now it worked:

And here are the data:

A60F2400L B90F2400R C120F1900L D150F850F1350R E180F500F1200L 200670743174.dkr.ecr.us-east-1.amazonaws.com/subt/robotika:ver56 ROBOTIKA_X2_SENSOR_CONFIG_1

There are five Robotika robots running Ver56. Robots A and B take the risk and try to explore the whole world for 40 minutes. It is risky, because there is only one hour for the competition, so they have to manage to return within 20 minutes, provided everything goes fine and they do not fall into the elevator's vertical shaft, for example. Robot C then tries normal exploration and should be able to return in time (again, if everything goes well). Robots D and E are housekeeping robots: after a shorter time they return home and check that everything is OK. And yes, hopefully they report some artifacts too …
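The robot names in the submission line appear to encode each robot's plan. The sketch below parses them under one reading of the format, which is an assumption: the number after the letter is taken as a start delay in seconds, each `F<n>` as an exploration time budget in seconds, and the final L/R as the wall to follow. `parse_robot_name` and the regular expression are hypothetical, not OSGAR code.

```python
import re

# Assumed format: robot letter, a number, one or more F<seconds> steps, L/R.
NAME_RE = re.compile(r"([A-Z])(\d+)((?:F\d+)+)([LR])")


def parse_robot_name(name):
    """Split a robot name like 'A60F2400L' into its parts.

    Reading (an assumption): the first number is a start delay in seconds,
    each F<n> an exploration time budget in seconds, and L/R the wall to
    follow.
    """
    m = NAME_RE.fullmatch(name)
    if m is None:
        raise ValueError(f"unexpected robot name: {name!r}")
    letter, delay, steps, side = m.groups()
    budgets = [int(t) for t in re.findall(r"F(\d+)", steps)]
    return letter, int(delay), budgets, side


for name in "A60F2400L B90F2400R C120F1900L D150F850F1350R E180F500F1200L".split():
    print(name, parse_robot_name(name))
```

With this reading, A and B spend 2400 s (40 min) of the 3600 s hour exploring, which leaves the 20 minutes for the return mentioned above. The meaning of the two F values of D and E is not stated in the text, so the sketch only extracts them.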

Ver56, what is it? First of all, it is (again) a version which never ran on AWS. It just could not. Not even Ver55, which contains the refactoring of the ROS interface! Yes, no kidding: 24 hours before the final submission we changed the core C++ code used to communicate from OSGAR to the ROS master. The motivation was losing sensor data when the system is overloaded, but would you do this in normal production? Definitely NO!

What else? There are final tune-ups from Jirka so that the robots do not fall down stairs while still navigating narrow corridors and climbing steep ramps. The wall distance was finally set to 80cm.
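The 80cm wall distance is the setpoint of a wall-following behavior. A minimal proportional sketch, assuming a measured side distance is available: `wall_follow_steering`, the gain and the turn-rate cap are hypothetical, not Jirka's tuned code.

```python
def wall_follow_steering(side_dist, desired=0.8, gain=1.5, max_turn=0.5, side="L"):
    """Proportional wall following.

    side_dist: measured distance (m) to the followed wall.
    Returns an angular speed in rad/s, positive = turn left, clamped to
    +/- max_turn. Following the left wall, being too far means turning left;
    following the right wall, the sign is flipped.
    """
    error = side_dist - desired  # > 0: too far from the wall
    turn = gain * error if side == "L" else -gain * error
    return max(-max_turn, min(max_turn, turn))
```

A larger setpoint keeps more margin from walls and edges, while a smaller one leaves more room in narrow corridors; the clamp keeps a single noisy distance reading from causing a violent turn.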

Finally, there were major changes in artifact detection. Full RGBD (image and depth) data were used: besides the color-based estimation of artifacts, the candidates were re-filtered by a clip in the distance plane (expected median distance +/- 20cm), and the majority of pixels had to remain. The last strike was filtering by object height, mainly to filter out other red robots and not report them as Backpack.
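The depth re-check can be sketched as follows, under stated assumptions. The "expected median distance" is taken here as the median depth of the blob's own pixels, "majority" as more than half of the pixels with a valid depth, and the height test as the vertical span of the kept pixels. `confirm_artifact` and `max_height` are hypothetical names, not the Ver56 code.

```python
import statistics


def confirm_artifact(depths, heights, tol=0.2, min_keep=0.5, max_height=None):
    """Re-check a color-detected blob using depth data.

    depths: distance (m) of each blob pixel, 0 = no depth reading.
    heights: height above ground (m) of the same pixels.
    Pixels within +/- tol of the median distance are kept; the blob passes
    if more than min_keep of the valid pixels remain and, optionally, the
    kept pixels span at most max_height vertically.
    """
    valid = [(d, h) for d, h in zip(depths, heights) if d > 0]
    if not valid:
        return False
    median = statistics.median([d for d, _ in valid])
    kept = [(d, h) for d, h in valid if abs(d - median) <= tol]
    if len(kept) / len(valid) <= min_keep:
        return False  # color blob scattered in depth: probably not one object
    if max_height is not None:
        hs = [h for _, h in kept]
        if max(hs) - min(hs) > max_height:
            return False  # too tall, e.g. another red robot
    return True


print(confirm_artifact([2.0, 2.1, 1.9, 2.05, 5.0], [0.1, 0.2, 0.3, 0.25, 0.1]))  # True
```

In the example, four of the five pixels lie within 20cm of the 2.05 m median, so the blob passes; the 5.0 m pixel is treated as background seen through or around the object.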

Sigh, fingers crossed! Now there will be a 3-week pause with no indication of what is going on, so do not ask.

p.s. Thanks Jirka, PavelJ and Zbyněk for extraordinary support!

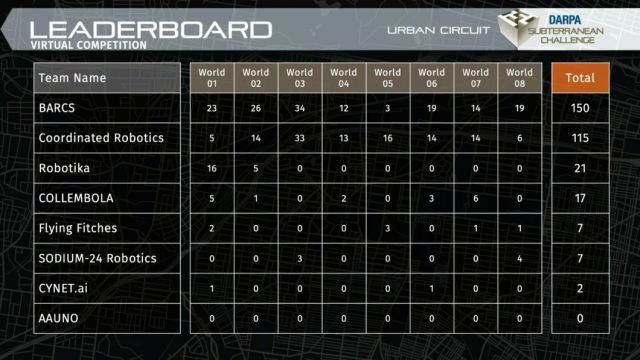

27th February 2020 — Virtual SubT Urban final results

- SubTv - Urban Circuit Awards Ceremony & Technical Interchange Meeting - DARPA Subterranean Challenge

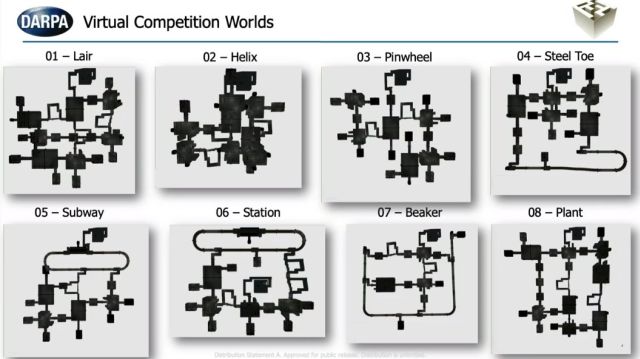

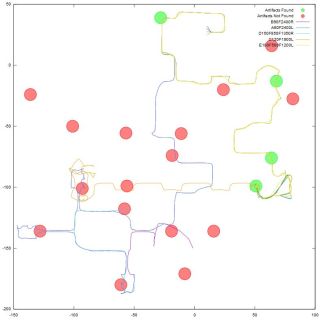

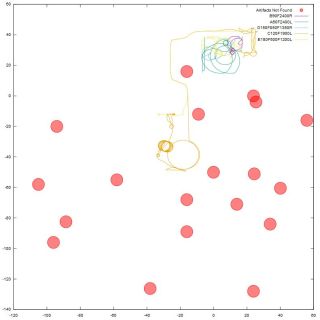

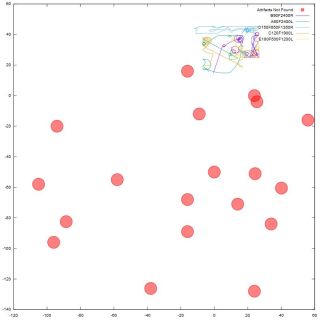

5th March 2020 — Virtual Urban Worlds

I do not have enough space left on my hard drive, but with the new circuit there is also a new feature, robot_paths.svg! There is no longer any need to extract the paths of all robots: we get them for free, plus the positions of correctly reported and unreported artifacts.

But that is approximately all of the good news. When I looked at the first 4 runs, it looked nice:

… but then there were 20 (totally) failing runs:

It is true that we were probably at the edge of the resources … yeah, we should be, and we are, happy with the 3rd place we got!